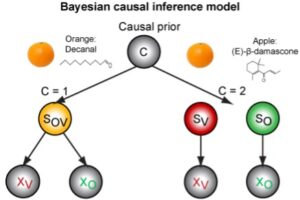

Humans integrate sensory stimuli from the physical senses (e.g. sight, hearing, touch) and the chemical senses (e.g. smell, taste) into a coherent multisensory perception of their environment and evaluate it. For example, we perceive food based on its appearance and odour and evaluate whether we like it or not. However, our brain should only integrate and evaluate multisensory stimuli that can originate from a common cause: when seeing and smelling food, the brain must first infer whether the appearance and smell were actually caused by a single food, and not by different foods or other odour sources. This project uses psychophysical, psychophysiological and EEG methods to investigate how humans infer the causal structure of olfactory-visual food stimuli, integrate or segregate the stimuli and finally evaluate their multisensory pleasantness. The results will expand our understanding of how the brain forms, evaluates and expresses the multisensory perception of food.

Project duration: 2023-2026

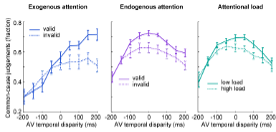

In our everyday environment, our brain constantly combines a multitude of sensory stimuli into a coherent multisensory perception of the environment. For example, at a party we correctly perceive which voices belong to which faces. To link the stimuli veridically, the brain should only combine stimuli from one source (such as a speaker). However, the brain has only limited attentional capacity to process multisensory stimuli and to focus on relevant ones (e.g. the conversation partner). The brain therefore has to solve two challenges: First, it must infer the causal structure of the multisensory stimuli in order to integrate them in the case of a common cause or to segregate them in the case of independent causes. Second, it must use selective attention to direct its limited resources to relevant stimuli amid competing multisensory stimuli. The current project investigates the interplay of multisensory causal inference and attention in audiovisual perception using psychophysics, electroencephalography (EEG) and Bayesian computational modelling.
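To make the first challenge concrete, the following is a minimal sketch of how a Bayesian observer could compute the probability that an auditory and a visual signal share a common cause. It follows a widely used textbook-style formulation with Gaussian sensory noise and a Gaussian spatial prior, and is not necessarily the model used in this project. The function name, the parameter names and all numerical values are illustrative assumptions.

```python
import math


def posterior_common_cause(x_a, x_v, sigma_a, sigma_v, sigma_p,
                           mu_p=0.0, p_common=0.5):
    """Posterior probability that x_a and x_v arise from one source.

    Assumed generative model (hypothetical parameter names):
      common cause:       s ~ N(mu_p, sigma_p^2), x_a ~ N(s, sigma_a^2),
                          x_v ~ N(s, sigma_v^2)
      independent causes: s_a, s_v ~ N(mu_p, sigma_p^2) drawn separately
    """
    var_a, var_v, var_p = sigma_a ** 2, sigma_v ** 2, sigma_p ** 2

    # Likelihood of both signals under a common cause (source integrated out).
    det_c1 = var_a * var_v + var_a * var_p + var_v * var_p
    quad_c1 = ((x_a - x_v) ** 2 * var_p
               + (x_a - mu_p) ** 2 * var_v
               + (x_v - mu_p) ** 2 * var_a) / det_c1
    like_c1 = math.exp(-0.5 * quad_c1) / (2 * math.pi * math.sqrt(det_c1))

    # Likelihood under independent causes: product of two 1-D Gaussians.
    quad_c2 = ((x_a - mu_p) ** 2 / (var_a + var_p)
               + (x_v - mu_p) ** 2 / (var_v + var_p))
    like_c2 = math.exp(-0.5 * quad_c2) / (
        2 * math.pi * math.sqrt((var_a + var_p) * (var_v + var_p)))

    # Bayes' rule over the two causal structures.
    evidence = like_c1 * p_common + like_c2 * (1.0 - p_common)
    return like_c1 * p_common / evidence


# Illustrative values only: signals at the same location vs. far apart.
p_near = posterior_common_cause(0.0, 0.0, sigma_a=2.0, sigma_v=1.0, sigma_p=10.0)
p_far = posterior_common_cause(-10.0, 10.0, sigma_a=2.0, sigma_v=1.0, sigma_p=10.0)
```

In this sketch, signals that coincide in space yield a higher common-cause probability than signals that are far apart, which corresponds to integrating in the first case and segregating in the second.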

Project duration: 2024-2027

In everyday life, our brain continuously combines information from different senses to form a coherent overall picture – for example, in a noisy environment, we associate a speaker’s voice with the corresponding lip movements. To ensure that our perception remains reliable, the brain should only combine sensory signals if they are likely to originate from the same source, while signals from independent sources should be processed separately. Previous research has provided important insights into this process, but has mostly focused on short, rather static stimuli. In the real world, however, perception takes place in dynamic situations in which stimuli are constantly changing and the brain can collect information over time to reduce uncertainty. For example, at a party with many people talking, the brain can collect information over long periods of time to decide which voice belongs to which face.

In this project, we use audiovisual perception experiments, neuroimaging (EEG & fMRI) and computational models to investigate how the brain weighs competing explanations for audiovisual sensory impressions and decides whether to integrate or segregate sensory information. Using a time-resolved, model-based approach, we aim to better understand how the brain achieves stable multisensory perception in complex, rapidly changing environments.

Project duration: 2026-2029